Gradient Flow #23: AI Liabilities, Data Quality, Robust Language Models

This edition has 408 words, which will take you about 2 minutes to read.

“It is always easier to destroy a complex system than to selectively alter it.” - Roby James.

Data Exchange podcast

Improving the robustness of natural language applications: In recent years, adversarial attacks against computer vision models have been covered in numerous media articles. Similar attacks have surfaced for NLP models, and a series of research projects has been dedicated to generating adversarial examples and defending against these adversaries. Jack Morris is co-creator of TextAttack, an open source framework for adversarial attacks, data augmentation, and adversarial training in NLP.
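
To make the idea concrete, here is a minimal sketch of one classic attack strategy, greedy synonym substitution, run against a toy lexicon-based sentiment classifier. The classifier, word lists, and synonym table are all hypothetical, and this is not TextAttack's API. TextAttack builds attacks from separate components (a goal function, constraints, a transformation, and a search method); the sketch hard-codes very simple versions of each.

```python
# Toy lexicon-based sentiment classifier (hypothetical stand-in for a real NLP model).
POSITIVE = {"great", "good", "excellent", "enjoyable"}
NEGATIVE = {"bad", "terrible", "boring", "awful"}

# Hypothetical synonym table; real attacks draw candidates from word embeddings,
# thesauri, or language models and filter them with semantic constraints.
SYNONYMS = {
    "great": ["fine", "superb"],
    "good": ["decent", "fine"],
    "terrible": ["dreadful"],
    "boring": ["tedious"],
}


def score(words):
    """Net sentiment: +1 per positive word, -1 per negative word."""
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def predict(words):
    return "positive" if score(words) > 0 else "negative"


def greedy_substitution_attack(sentence, max_swaps=3):
    """Greedily swap in synonyms until the predicted label flips.

    Returns the adversarial sentence, or None if the attack fails.
    """
    words = sentence.lower().split()
    original = predict(words)
    for _ in range(max_swaps):
        current = score(words)
        best = None  # (gain, candidate_words)
        for i, w in enumerate(words):
            for syn in SYNONYMS.get(w, []):
                candidate = words[:i] + [syn] + words[i + 1:]
                # How far this single swap moves the score toward the opposite label.
                if original == "positive":
                    gain = current - score(candidate)
                else:
                    gain = score(candidate) - current
                if best is None or gain > best[0]:
                    best = (gain, candidate)
        if best is None or best[0] <= 0:
            return None  # no remaining swap makes progress
        words = best[1]
        if predict(words) != original:
            return " ".join(words)
    return None


print(greedy_substitution_attack("A great and good film"))  # a fine and decent film
```

A human still reads "a fine and decent film" as a positive review, yet the toy classifier now labels it negative; that gap between human and model judgment is what adversarial training and augmentation try to close.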

End-to-end deep learning models for speech applications: Yishay Carmiel is an AI Leader at Avaya, a large company focused on digital communications. In this episode, we discussed recent developments in speech technologies, specifically the rise of end-to-end speech models for ASR (automatic speech recognition).
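
End-to-end ASR models map audio features directly to output symbols (characters or word pieces) with a single neural network, instead of chaining separate acoustic, pronunciation, and language models. One common way to train and decode such models, though not necessarily the one discussed in the episode, is connectionist temporal classification (CTC). The sketch below shows CTC's simplest decoding rule on made-up per-frame probabilities: pick the most likely symbol in each frame, merge repeats, then drop the blank symbol. The function name and blank symbol are choices made for this illustration; production systems typically use beam search, often combined with a language model.

```python
BLANK = "_"  # symbol used for the CTC blank in this sketch


def ctc_greedy_decode(frame_probs, alphabet):
    """Greedy (best-path) CTC decoding.

    frame_probs: one list of symbol probabilities per audio frame.
    alphabet: the symbols those probabilities refer to, including BLANK.
    """
    # 1. Take the most likely symbol in each frame.
    best_path = [alphabet[max(range(len(p)), key=p.__getitem__)] for p in frame_probs]
    # 2. Collapse consecutive repeats, then 3. drop blanks.
    decoded = []
    prev = None
    for sym in best_path:
        if sym != prev and sym != BLANK:
            decoded.append(sym)
        prev = sym
    return "".join(decoded)


alphabet = ["_", "c", "a", "t"]
frames = [
    [0.1, 0.8, 0.05, 0.05],  # c
    [0.2, 0.6, 0.1, 0.1],    # c (repeat, collapsed)
    [0.7, 0.1, 0.1, 0.1],    # blank
    [0.1, 0.1, 0.7, 0.1],    # a
    [0.1, 0.1, 0.1, 0.7],    # t
    [0.6, 0.1, 0.1, 0.2],    # blank
]
print(ctc_greedy_decode(frames, alphabet))  # cat
```

The blank is what lets CTC emit genuinely doubled letters: a path like "l _ l" decodes to "ll", while "l l" collapses to a single "l".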

[Image by Aaron Washington.]

Machine Learning tools and infrastructure

Navigate the road to Responsible AI: In this new post, I use three recent surveys and an ethnographic study to assemble a primer summarizing how companies are approaching Responsible AI today and what they aspire to do in the future.

SDV: Synthetic data generation has been in the news because of developments such as deepfakes and the need for training data in computer vision applications. But what about structured and semi-structured data? This new library from MIT takes single-table, multi-table, and time-series datasets and generates synthetic data with the same format and statistical properties. Developers can use it to test the robustness of all sorts of applications: not just machine learning models, but any software application that consumes data.
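
SDV offers several models, one of which is based on Gaussian copulas. The sketch below illustrates that general idea for a single table of numeric columns, using only the Python standard library: convert each column to normal scores by rank, estimate the correlation matrix of those scores, draw correlated normal samples, and map them back through each column's empirical quantiles. It is not SDV's API, it ignores categorical, multi-table, and time-series data, and all function names are hypothetical.

```python
import random
from statistics import NormalDist

STD_NORMAL = NormalDist()


def _normal_scores(column):
    """Map each value to a standard-normal score based on its rank."""
    n = len(column)
    order = sorted(range(n), key=lambda i: column[i])
    scores = [0.0] * n
    for rank, i in enumerate(order):
        # (rank + 0.5) / n keeps probabilities strictly inside (0, 1).
        scores[i] = STD_NORMAL.inv_cdf((rank + 0.5) / n)
    return scores


def _correlation(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / (vx * vy) ** 0.5


def _cholesky(m):
    """Lower-triangular L with L @ L.T == m (small floor keeps it stable)."""
    k = len(m)
    L = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(i + 1):
            s = sum(L[i][p] * L[j][p] for p in range(j))
            if i == j:
                L[i][j] = max(m[i][i] - s, 1e-12) ** 0.5
            else:
                L[i][j] = (m[i][j] - s) / L[j][j]
    return L


def fit_and_sample(table, n_rows, seed=0):
    """table: dict mapping column name -> list of numbers (equal lengths)."""
    rng = random.Random(seed)
    names = list(table)
    scores = [_normal_scores(table[c]) for c in names]
    corr = [[_correlation(a, b) for b in scores] for a in scores]
    L = _cholesky(corr)
    sorted_cols = [sorted(table[c]) for c in names]
    rows = []
    for _ in range(n_rows):
        z = [rng.gauss(0.0, 1.0) for _ in names]
        # Turn independent draws into correlated ones: x = L @ z.
        x = [sum(L[i][j] * z[j] for j in range(i + 1)) for i in range(len(names))]
        row = {}
        for name, xi, col in zip(names, x, sorted_cols):
            u = STD_NORMAL.cdf(xi)
            idx = min(int(u * len(col)), len(col) - 1)
            row[name] = col[idx]  # empirical quantile of the original column
        rows.append(row)
    return rows


real = {"x": list(range(50)), "y": [2 * v for v in range(50)]}
synthetic = fit_and_sample(real, n_rows=5, seed=1)
print(synthetic)  # y stays close to 2 * x, mirroring the original correlation
```

Because values come from each column's empirical quantiles, every synthetic value also appears in the original data; a fuller synthesizer would typically fit smooth marginal distributions instead.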

The quest for high-quality data: I’m resurfacing this 2019 overview I wrote with Ihab Ilyas because the topic of data quality has recently been popping up on my radar. There are now a few startups, as well as open source projects (Apache Griffin, Deequ), that address aspects of this important area.
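
Tools like Deequ (a library from AWS Labs that runs on Apache Spark) let you declare constraints such as completeness and uniqueness and then verify a dataset against them. The sketch below mimics that declarative style in plain Python on a list of dict rows; the `Check` class and the `is_complete`, `is_unique`, `is_in_range`, and `verify` helpers are hypothetical and are not the API of Deequ or Apache Griffin.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Check:
    name: str
    holds: Callable[[list], bool]  # returns True when the rows satisfy the check


def is_complete(column):
    """Every row has a non-null value in `column`."""
    return Check(f"is_complete({column})",
                 lambda rows: all(r.get(column) is not None for r in rows))


def is_unique(column):
    """No two rows share the same value in `column`."""
    def holds(rows):
        values = [r.get(column) for r in rows]
        return len(values) == len(set(values))
    return Check(f"is_unique({column})", holds)


def is_in_range(column, lo, hi):
    """Every non-null value in `column` lies in [lo, hi]."""
    return Check(f"is_in_range({column}, {lo}, {hi})",
                 lambda rows: all(lo <= r[column] <= hi
                                  for r in rows if r.get(column) is not None))


def verify(rows, checks):
    """Run all checks and return the names of the ones that failed."""
    return [c.name for c in checks if not c.holds(rows)]


rows = [{"id": 1, "age": 34}, {"id": 2, "age": None}, {"id": 2, "age": 210}]
print(verify(rows, [is_complete("id"), is_complete("age"),
                    is_unique("id"), is_in_range("age", 0, 120)]))
# ['is_complete(age)', 'is_unique(id)', 'is_in_range(age, 0, 120)']
```

Keeping checks as data rather than ad hoc assertions makes it easy to run the same suite on every new batch and report exactly which constraints broke.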

Free Virtual Conferences

Understanding and Avoiding AI Liabilities in Practice: Failures are inevitable in complex systems such as AI. In this December 15th webinar, Andrew Burt, Managing Partner at BNH.ai, explains how you can mitigate liabilities arising from AI.

Work and Hiring

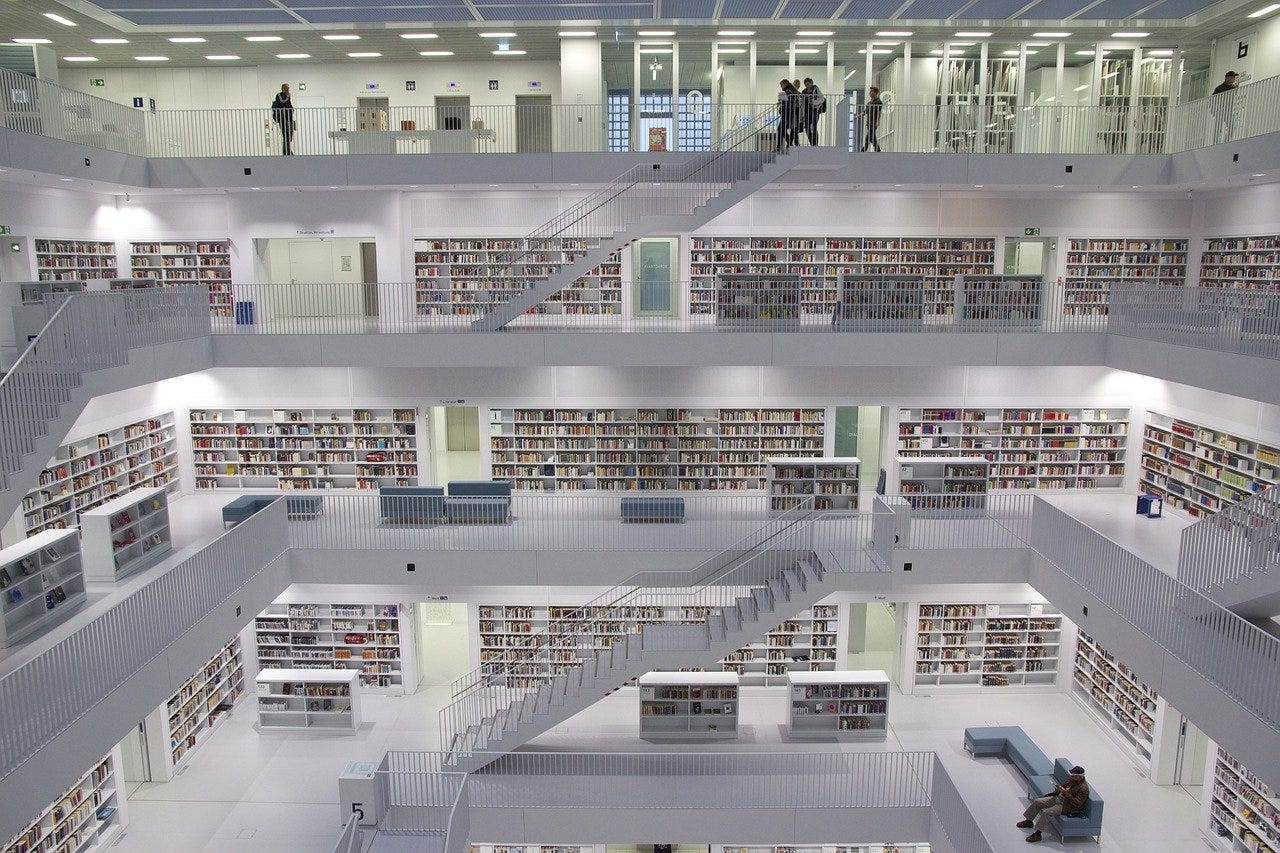

[Image: Stuttgart Library by Jörg Fiedler.]

Recommendations

The open-source differential privacy library from Google: Here’s a good overview of differential privacy (DP) via a recent interview with two of the people central to open-sourcing Google’s DP library.
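
Differential privacy is often introduced through the Laplace mechanism: add noise drawn from a Laplace distribution with scale Δ/ε, where Δ (the sensitivity) is the most one person's record can change the query result and ε is the privacy budget. Below is a minimal sketch for a counting query, whose sensitivity is 1. The function names are hypothetical and this is not the API of Google's library; production libraries also handle issues this naive floating-point version ignores, such as subtle weaknesses in how noise is sampled.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale): the difference of two i.i.d. exponentials
    with rate 1/scale is Laplace-distributed with that scale."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(records, predicate, epsilon, rng):
    """Epsilon-DP count via the Laplace mechanism.

    Adding or removing one record changes a count by at most 1,
    so the sensitivity is 1 and the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for r in records if predicate(r))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)
ages = [23, 35, 41, 52, 67, 29, 74, 38]
print(private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng))  # 4 plus noise
```

Smaller values of ε mean a larger noise scale, so stronger privacy guarantees cost accuracy.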

Active Driving Assistance Systems: Test Results and Design Recommendations. A November summary from Consumer Reports.

If you enjoyed this newsletter, please support our work by encouraging your friends and colleagues to subscribe.

Ben Lorica edits the Gradient Flow newsletter. He is co-chair of the Ray Summit, chair of the NLP Summit, and host of the Data Exchange podcast. You can follow him on Twitter @BigData. This newsletter is produced by Gradient Flow.