AI is describing your competitors better than you. Here's why.

The AI Visibility Playbook: Surviving the Shift from Search to Synthesis

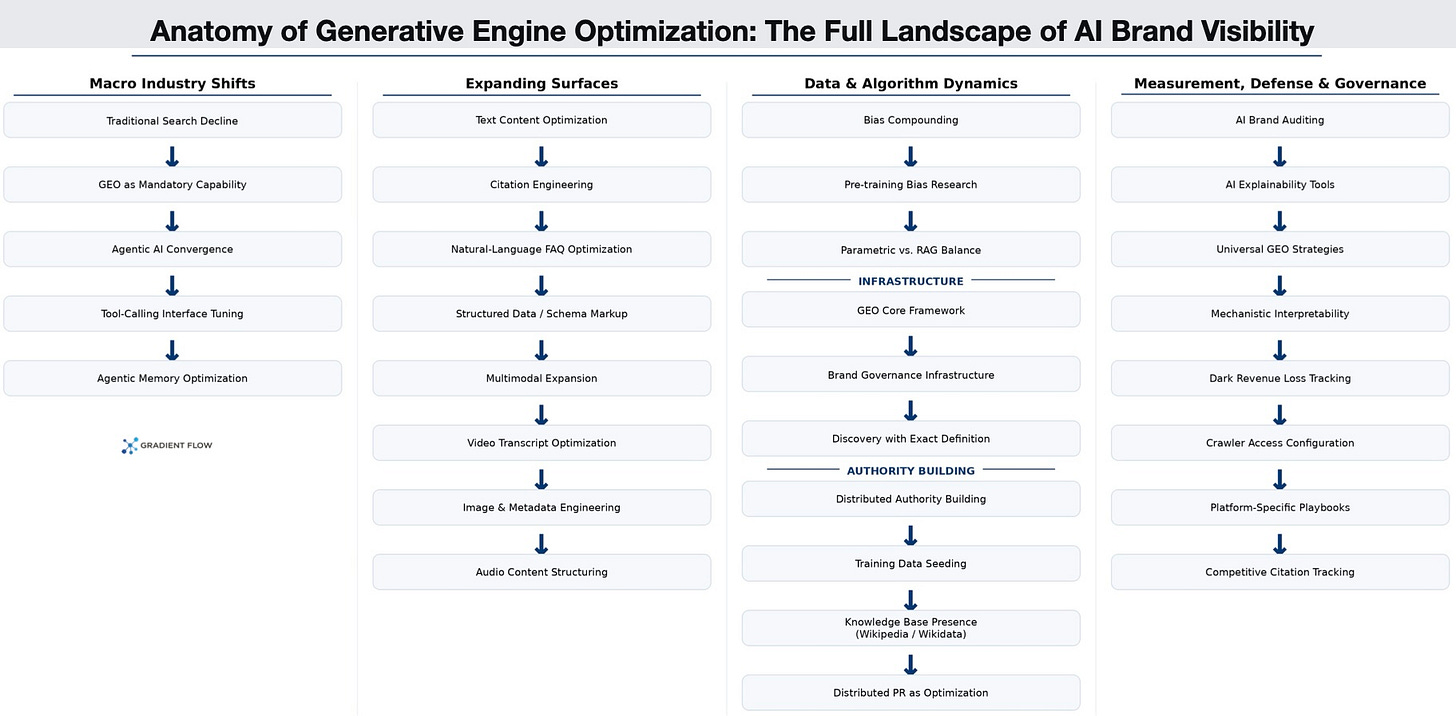

More people are turning to AI chatbots instead of traditional search engines to find information online. Even Google now displays an AI Overview at the top of many search results to summarize answers directly. When search engines ruled the internet, search engine optimization (SEO) served as the primary tool for companies to manage their brand reputation and ensure digital visibility. Now that foundation models synthesize responses rather than pointing users to external pages, SEO is being rethought for a different target. Business leaders are adopting new strategies to influence the underlying training data and retrieval mechanisms of these AI systems, with the goal of keeping their brands visible and, just as importantly, accurately represented.

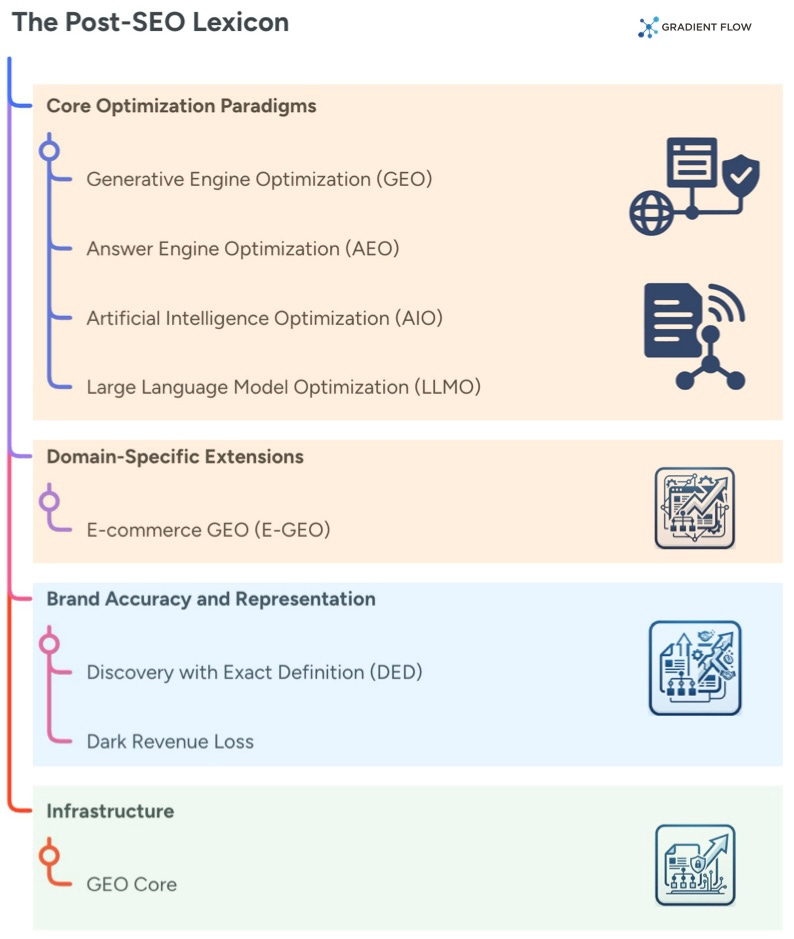

The shift in digital visibility centers on Generative Engine Optimization (GEO). Unlike traditional optimization, which targets link rankings, this practice aims to influence the learned patterns and retrieval logic of foundation models. The goal is to ensure generative AI systems select and accurately cite a brand when synthesizing responses. Several related terms fall under this umbrella. Answer Engine Optimization (AEO) is the process of optimizing content to fit the conversational nature of modern AI, making it more likely to be featured in direct, synthesized answers. Artificial Intelligence Optimization (AIO) acts as a broader catchall label for these practices. Meanwhile, some marketers use Large Language Model Optimization (LLMO) to describe the same goal of shaping internal model weights and training data. These terms are often used interchangeably, which reflects how early-stage and unsettled the field still is.

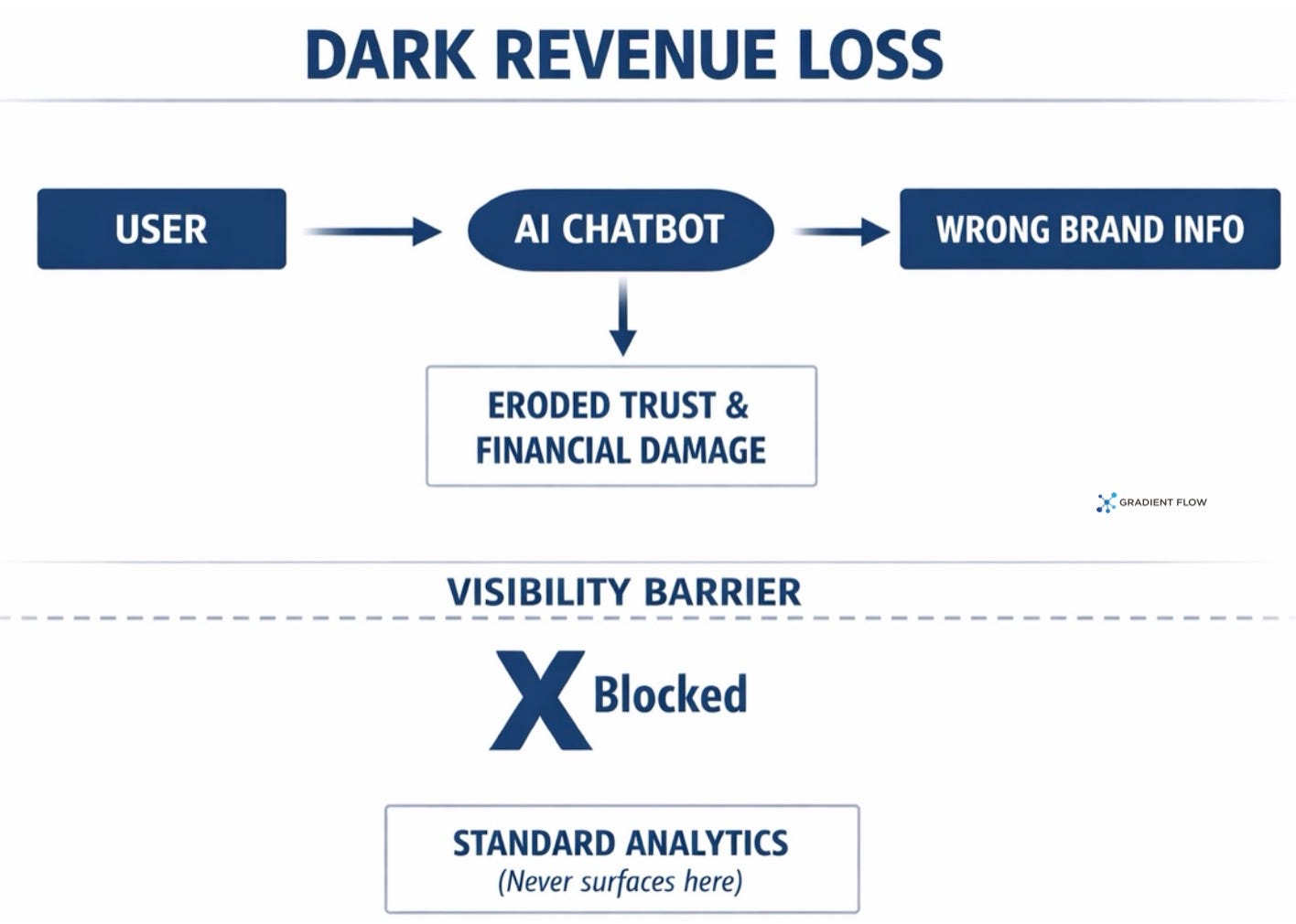

As these practices mature, they are fracturing into specialized domains and deeper conceptual frameworks. E-commerce GEO (E-GEO) explores whether product listings and reviews require distinct optimization tactics or if standard strategies work across all industries. Beyond mere visibility, practitioners are identifying new challenges like Discovery with Exact Definition. This concept highlights the gap between an AI retrieving brand information and actually representing it with contextual accuracy. A model can cite a company and still get it wrong. When that happens at scale, companies face what one researcher has called Dark Revenue Loss: the invisible financial damage and eroded customer trust that occur entirely within AI-mediated conversations and never surface in standard analytics dashboards.

Addressing that invisible leakage requires more than content tweaks. A proposed architectural concept called GEO Core envisions a dedicated brand governance infrastructure layer, something analogous to a CRM but built to operate across retrieval-augmented generation (RAG) pipelines, chatbots, and AI agents, ensuring that what a model believes about a company matches what the company actually is. While I am not aware of any commercial implementations yet, this concept points toward a future where managing AI-mediated brand identity is treated as an enterprise infrastructure problem rather than a marketing one.

The AI Visibility Operations Playbook

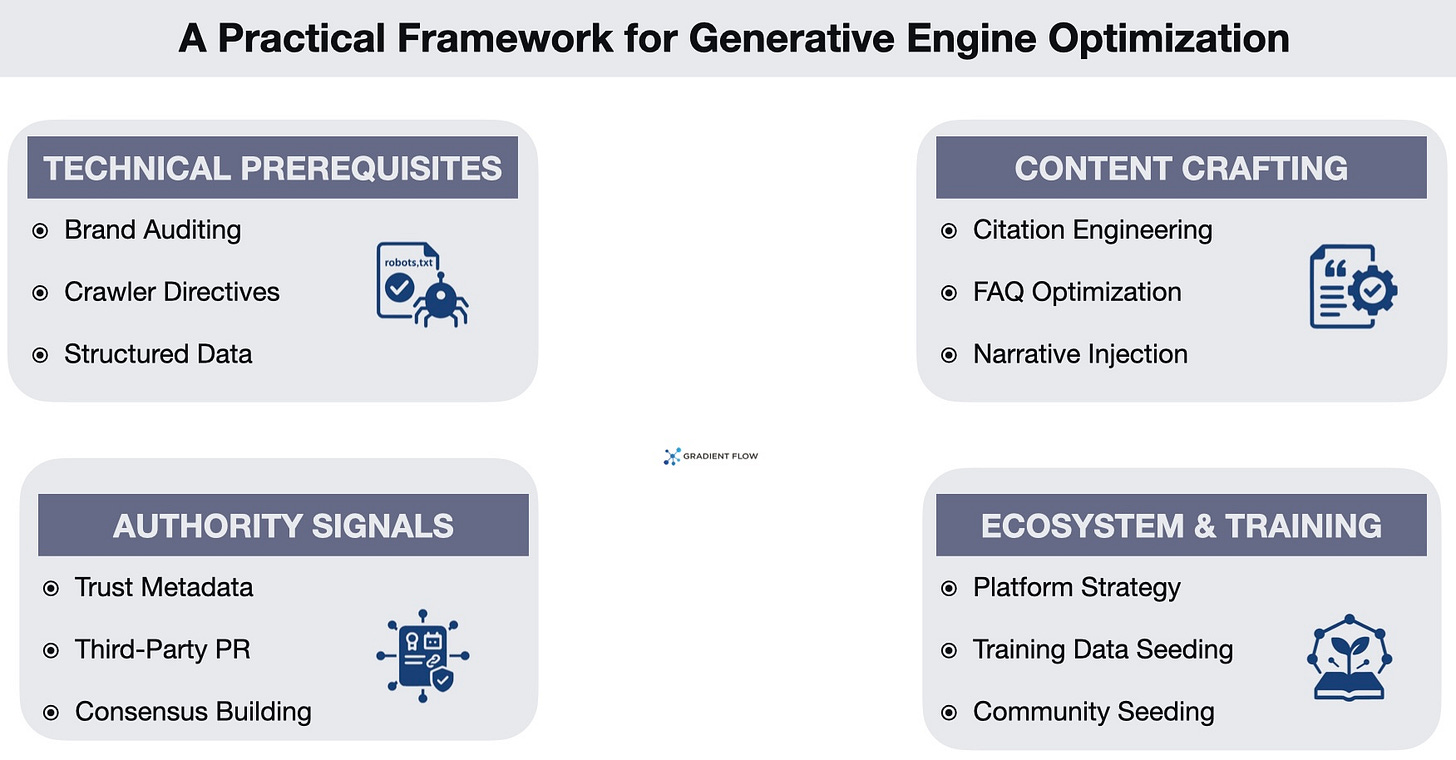

We are still in the early days of this shift, but practitioners are already deploying concrete strategies to adapt. Here are some of the techniques teams are using right now to ensure their brands surface accurately in AI-generated answers.

Crawler access configuration. Before an AI can summarize your content, it has to be able to read it. Many legacy website configurations accidentally block AI-specific web crawlers like the ones used by OpenAI or Google. Fixing this by updating a site’s robots.txt file to explicitly allow these bots is the lowest-effort, highest-leverage action a team can take today, and it should happen before any other investment in this space.
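As a rough illustration of that check, the sketch below uses Python's standard `urllib.robotparser` to test whether a robots.txt file blocks AI crawlers. The crawler tokens `GPTBot` and `Google-Extended` are used as examples and should be confirmed against each vendor's current documentation; the two robots.txt bodies and the `example.com` URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Example crawler tokens (assumption: verify current names with each vendor).
AI_CRAWLERS = ["GPTBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the crawler tokens that this robots.txt disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical legacy file that blocks one AI crawler outright.
LEGACY_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

# Hypothetical fixed file that names each AI crawler explicitly.
FIXED_ROBOTS = """\
User-agent: GPTBot
Allow: /

User-agent: Google-Extended
Allow: /

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(LEGACY_ROBOTS, "https://example.com/pricing"))
print(blocked_ai_crawlers(FIXED_ROBOTS, "https://example.com/pricing"))
```

Running the same function against a site's live robots.txt, for a handful of important URLs, gives a quick pass/fail list before any other work begins.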

AI brand auditing. Teams must establish a baseline by directly querying chatbots to see how their brand is currently represented in terms of accuracy, sentiment, and framing. Commercial visibility toolkits from major search marketing platforms now support this kind of tracking across citation frequency and competitive positioning. Without this baseline you are optimizing blind, and most organizations are still skipping it.
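One minimal way to turn those manual queries into a baseline is to save the chatbot answers and count how often each brand is mentioned. The sketch below assumes the answers have already been collected as plain strings; the brand names `Acme` and `Globex` and the sample answers are invented for illustration, and a simple mention count says nothing about accuracy or sentiment, which still need human review.

```python
import re


def mention_counts(answers, brands):
    """Count how many answers mention each brand (case-insensitive, whole word)."""
    counts = {brand: 0 for brand in brands}
    for answer in answers:
        for brand in brands:
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, answer, flags=re.IGNORECASE):
                counts[brand] += 1
    return counts


def citation_share(answers, brands):
    """Fraction of recorded answers in which each brand appears at least once."""
    counts = mention_counts(answers, brands)
    total = len(answers)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Hypothetical answers recorded from a set of audit prompts.
recorded_answers = [
    "For small teams, Acme and Globex are both common choices.",
    "Globex is often recommended for its reporting features.",
    "Popular options include Globex and several open source tools.",
    "Acme offers a free tier, according to its pricing page.",
]

print(citation_share(recorded_answers, ["Acme", "Globex"]))
```

Rerunning the same prompts on a schedule and comparing the shares over time is what makes later optimization work measurable.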

This isn’t a marketing problem anymore. Managing your brand in the AI era is an enterprise infrastructure challenge.

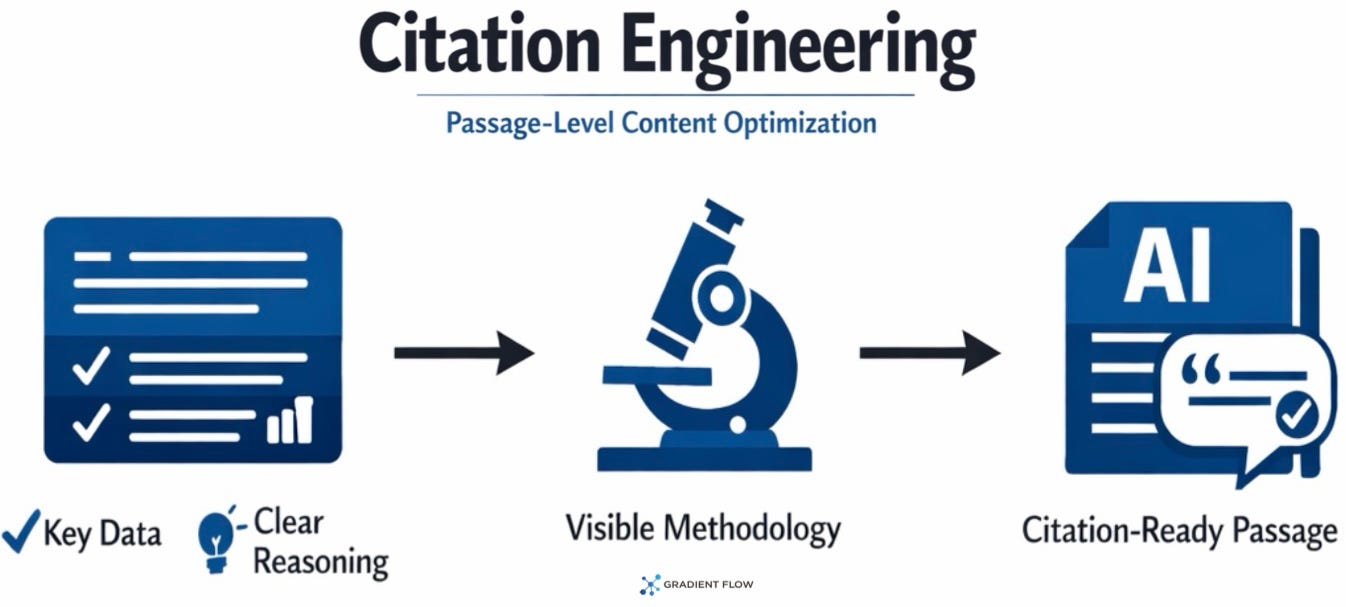

Citation engineering. This technique involves writing content in self-contained blocks of 30 to 60 words that pair a specific data point with clear reasoning, so an AI can extract the passage directly without needing to reinterpret it. Research demonstrated that this structural approach can increase visibility in generative results by up to 40 percent. The uncomfortable implication is that the best-structured source often beats the most accurate one in AI-mediated information delivery.
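A simple lint for this structure might look like the sketch below. It treats each blank-line-separated paragraph as a block, checks the 30-to-60-word range from the description above, and uses "contains at least one digit" as a crude stand-in for "includes a specific data point". That proxy and the function names are assumptions for illustration, not an established standard.

```python
import re


def is_extractable_block(text, min_words=30, max_words=60):
    """True if the block is within the word range and contains at least one digit."""
    word_count = len(text.split())
    has_data_point = re.search(r"\d", text) is not None
    return min_words <= word_count <= max_words and has_data_point


def audit_blocks(document):
    """Split on blank lines and report word count and pass/fail per paragraph."""
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", document) if p.strip()]
    return [
        {
            "words": len(p.split()),
            "extractable": is_extractable_block(p),
            "preview": p[:40],
        }
        for p in paragraphs
    ]


if __name__ == "__main__":
    # Hypothetical page text: one short teaser and one longer block with a figure.
    sample = "Short teaser line.\n\n" + " ".join(["filler"] * 35 + ["grew", "12%"])
    for row in audit_blocks(sample):
        print(row)
```

A check like this only flags structure; whether the reasoning in a block is clear still needs an editor's judgment.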

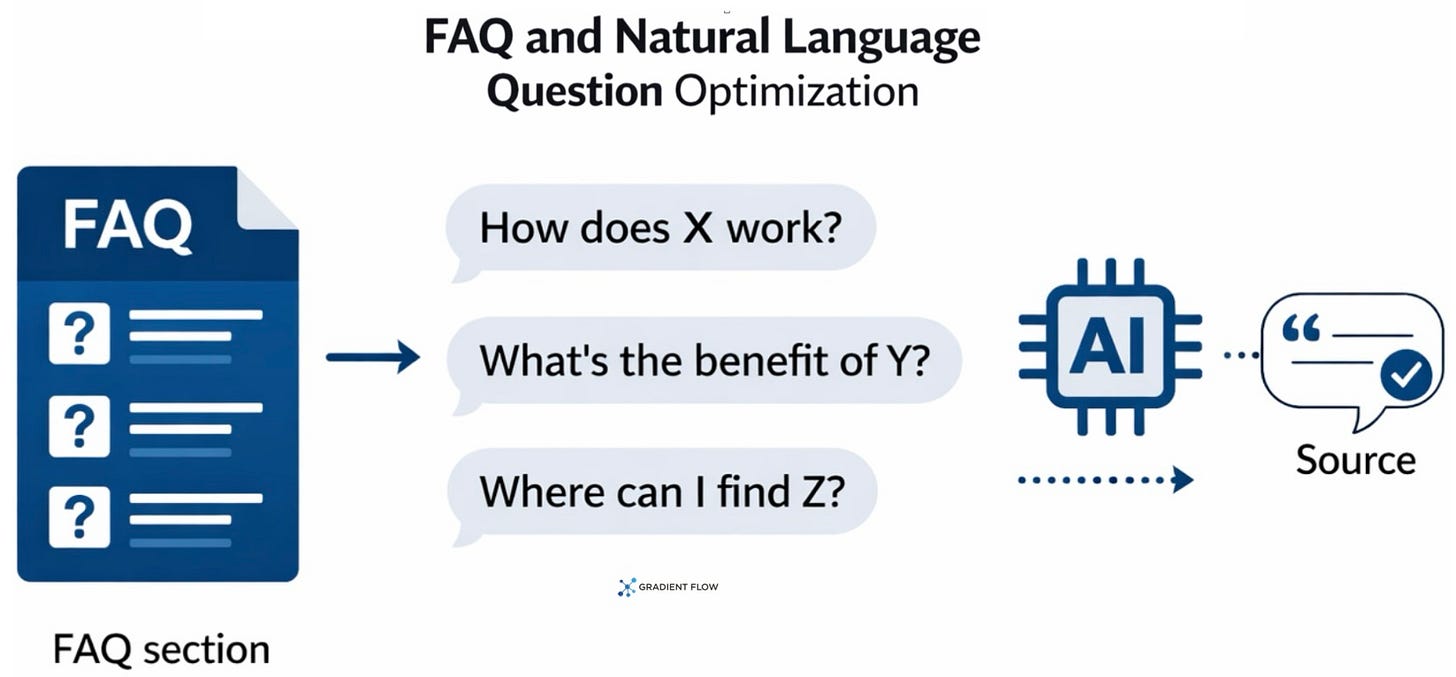

Natural language FAQ optimization. Users talk to chatbots differently than they type keywords into a search box, and content needs to reflect that conversational reality. By auditing the exact phrasing people use when prompting AI systems and creating matching question-and-answer pairs, teams make it trivially easy for the retrieval system to select their content. Adding just three to five of these natural language questions to an existing page can meaningfully increase the probability of a direct citation.
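To find which observed prompts have no matching question on a page, a team could start with something as simple as the sketch below. It compares each prompt with the existing FAQ questions using `difflib.SequenceMatcher` from the standard library. The 0.6 threshold, the function names, and the sample strings are illustrative assumptions, and character-level similarity is only a rough proxy for how a real retrieval system matches text.

```python
from difflib import SequenceMatcher


def best_match(prompt, faq_questions):
    """Return (closest_question, similarity) using a character-level ratio."""
    scored = [
        (question, SequenceMatcher(None, prompt.lower(), question.lower()).ratio())
        for question in faq_questions
    ]
    return max(scored, key=lambda pair: pair[1], default=(None, 0.0))


def uncovered_prompts(prompts, faq_questions, threshold=0.6):
    """Prompts whose closest FAQ question falls below the similarity threshold."""
    return [p for p in prompts if best_match(p, faq_questions)[1] < threshold]


# Hypothetical FAQ questions already on a page, and prompts collected from users.
existing_faq = ["How much does Acme cost per month?"]
observed_prompts = [
    "how much does acme cost per month?",
    "Can I export my data if I cancel?",
]

for prompt in uncovered_prompts(observed_prompts, existing_faq):
    print("No close FAQ match:", prompt)
```

Each prompt the function returns is a candidate for a new question-and-answer pair written in the user's own phrasing.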

Machine-readable structured data. Implementing schema markup translates human-readable content into explicit metadata that AI systems can parse with precision, signaling what a page is about, who wrote it, and how it should be classified. This is technical hygiene that has been standard SEO practice for years, but its value is amplified in AI retrieval contexts because generative systems benefit even more from explicit structural signals than traditional search crawlers did. If your content management system supports it, there is no defensible reason not to implement it.
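As a concrete example, the sketch below builds schema.org `Article` markup as JSON-LD and wraps it in the script tag that pages typically embed. The `@type`, `headline`, `author`, and `about` properties come from the schema.org vocabulary; the helper names and the chosen topic string are assumptions for illustration, and a production page would normally carry more properties than this.

```python
import json


def article_jsonld(headline, author_name, topic):
    """Describe what a page is about, who wrote it, and how it is classified."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",  # how the page should be classified
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},  # who wrote it
        "about": {"@type": "Thing", "name": topic},  # what it is about
    }


def as_script_tag(data):
    """Wrap JSON-LD in the script element used to embed it in an HTML page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


markup = article_jsonld(
    headline="The AI Visibility Playbook: Surviving the Shift from Search to Synthesis",
    author_name="Ben Lorica",
    topic="Generative Engine Optimization",
)
print(as_script_tag(markup))
```

Many content management systems generate this markup from page fields, so hand-written code like this is mainly useful for auditing what a page currently emits.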

Platform-specific ecosystem tuning. Treating all AI models as a monolith is a reliable way to underperform, as one large financial technology company recently discovered firsthand. Each AI provider has distinct data dependencies: optimizing for one major search-backed AI requires strong traditional SEO fundamentals, while optimizing for a video-integrated AI model requires well-structured video transcripts and active business profiles. Teams should maintain separate playbooks for each platform rather than assuming a single strategy transfers across all of them.

Distributed authority building. AI models look for consensus across multiple independent sources to determine what is credible about a company. Earning mentions, reviews, and coverage across authoritative publications and industry outlets provides the corroborating signals that increase a model’s confidence in citing your brand. This reframes traditional public relations as a direct optimization lever for AI retrieval, not just a brand awareness exercise.

Long-term training data seeding. Because foundation models are trained on massive public datasets, brands that maintain a consistent, high-quality presence across the open web are more likely to be inherently known by the model before any retrieval even occurs. To influence foundation models, teams should actively participate in community forums and secure a presence in structured databases like Wikidata and Wikipedia. This is a multi-year strategy, not a quick win, but the compounding effects across successive model generations make early investment disproportionately valuable.

Vectors of AI-Mediated Brand Identity

The optimization surface itself is quickly expanding well beyond text on a webpage. Because major AI platforms increasingly pull from diverse source types, strategy must grow to match: video transcripts need to be structured and accurate, image metadata needs to be clean and descriptive, and audio content needs to be organized for machine ingestion rather than just human listening. At the same time, as autonomous AI agents begin executing vendor evaluations and purchasing decisions on behalf of users, the relevant optimization target shifts from web pages to the tool-calling interfaces and data layers those agents actually query. The goal is no longer just helping a chatbot describe your company accurately. It is ensuring an autonomous system can find, evaluate, and transact with your business without friction or distortion.

The window to build this foundation is closing faster than most organizations recognize. Analysts project a steep contraction in traditional search volume by 2026, which puts these new capabilities on a path from optional to mandatory in a short timeframe. The urgency compounds because newer AI models are frequently trained on outputs from prior generations, meaning early interventions reinforce themselves over time. Accurate brand representation established in today’s training data creates a self-reinforcing advantage that late movers will find genuinely difficult to overcome. Measurement is also maturing: tooling that explains why a retrieval system preferred one document over another is moving from research pipelines toward commercial platforms, which means the discipline is shifting from educated guesswork toward something testable and repeatable. Organizations that begin building these competencies now will be the ones that remain visible and accurately represented as the transition accelerates.

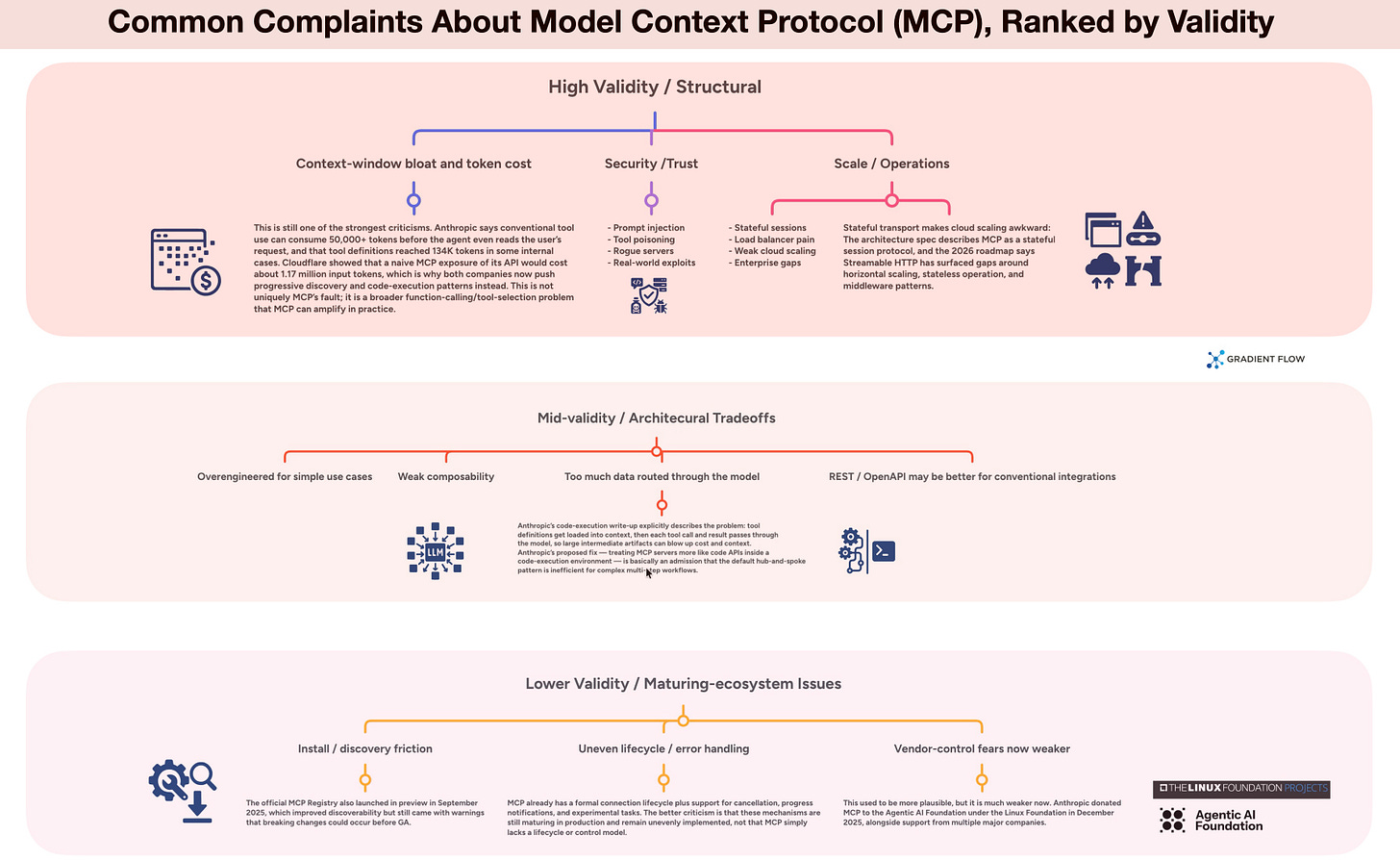


Ben Lorica edits Ethics.dev and the Gradient Flow newsletter, and he hosts the Data Exchange podcast. He helps organize the AI Conference and the AI Agent Conference, while also serving as the Strategic Content Chair for AI at the Linux Foundation. You can follow him on LinkedIn, X, Mastodon, Reddit, Bluesky, YouTube, or TikTok. This newsletter is produced by Gradient Flow.